Defang Blog

Deploy AI Agents to Any Cloud Account: Open-Sourcing Our Providers and Adding Azure

We're open-sourcing the per-cloud Pulumi providers that power Defang's deployment system and adding Azure to the supported set. One Compose file, one command, any customer's cloud — including managed LLMs, databases, and Redis mapped automatically.

The rise of AI agents is changing how software gets deployed. The old monolith is being replaced by collections of specialized agents and AI-powered services — and many of your customers don't want those running in a shared multi-tenant environment. They want them deployed into their cloud account, under their IAM policies, with their data never leaving their environment.

That means more deployments, across more clouds, with more managed services to wire up. Customer A is on Azure, Customer B is on AWS, Customer C is on GCP. Each one has different IAM, different networking, different compute primitives. And if your agents use LLMs, the differences multiply: Azure AI Foundry, Amazon Bedrock, and Vertex AI each have their own authentication, SDK, request format, and billing model.

Today we're open-sourcing the per-cloud Pulumi providers that power Defang's deployment system and adding Azure to the supported set.

See It Working

Here's a Compose file for an AI agent application with a managed LLM and a managed Postgres database:
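As a rough sketch, such a file can look like the following. Only the x-defang-llm marker is named in this post. The service names, port, image tag, and DATABASE_URL variable are illustrative assumptions, and x-defang-postgres is assumed here to be the Postgres counterpart of the LLM marker.

```yaml
# compose.yaml: illustrative sketch, not the exact file from this post
services:
  agent:
    build: .                     # hypothetical app directory containing a Dockerfile
    ports:
      - "8080:8080"              # assumed port for the agent's HTTP API
    x-defang-llm: true           # ask Defang to wire up the cloud's managed LLM
    environment:
      # "db" is the service name below, resolved through service discovery
      DATABASE_URL: postgres://postgres:${POSTGRES_PASSWORD}@db:5432/postgres
    depends_on:
      - db

  db:
    image: postgres:16           # assumed image tag
    x-defang-postgres: true      # assumed marker for a managed Postgres instance
    environment:
      POSTGRES_PASSWORD:         # left empty so the value is supplied at deploy time, not hard-coded
```

Nothing in the sketch names a specific cloud: the same definition is meant to resolve to different managed services depending on the deployment target.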

Deploy it to AWS:

Same file, deploy to Azure:

No Terraform modules to fork. No cloud-specific service wiring. The x-defang-llm marker tells Defang to map the service to each cloud's managed LLM offering automatically: Bedrock on AWS, Vertex AI on GCP, Azure AI Foundry on Azure. Databases (Postgres, MongoDB) and caching (Redis) are mapped the same way, though MongoDB on Azure is still on the roadmap. All services are placed on a private network, with service discovery that works just as it does in Docker Compose.
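Caching would follow the same pattern. The fragment below is a minimal sketch under assumptions: x-defang-redis is assumed to be the Redis analogue of x-defang-llm, and the service names, image tag, and REDIS_HOST variable are hypothetical.

```yaml
# Illustrative fragment: a managed Redis cache reached by its service name
services:
  agent:
    build: .
    environment:
      REDIS_HOST: cache          # the service name doubles as the hostname
    depends_on:
      - cache

  cache:
    image: redis:7               # assumed image tag
    x-defang-redis: true         # assumed marker for a managed Redis instance
```

Because the application only refers to the hostname cache, it does not need to know whether the backing service is ElastiCache, Memorystore, or Azure Managed Redis. Connection details such as TLS may still differ per cloud.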

Every resource is provisioned inside the customer's cloud account using their IAM credentials. Defang's control plane never sees their data or secrets.

Why Open Source

We're releasing the per-cloud providers — the code that translates a Compose definition into cloud-native resources — under the AGPL-3.0 license.

Trust through transparency. When your enterprise customers require you to deploy agents into their cloud account, they want to know exactly what infrastructure you're creating. Open-source providers mean their security team can audit every resource, every IAM role, every network rule before anything is provisioned. This is the code that runs inside their account — they should be able to read it.

Extensibility. Need custom VNet peering? A specific tagging policy? Fork the provider and adapt it. You're not locked into our opinions about networking or resource naming.

Community contribution. AGPL-3.0 is a strong copyleft license: you can use, modify, and distribute the providers, and if you distribute a modified version or let users interact with it over a network, you must make your changes available under the same license. Improvements flow back to the community.

The providers are Pulumi programs written in Go. Each one maps a Compose definition into cloud-native resources:

- Compute: ECS Fargate on AWS, Cloud Run on GCP, Azure Container Apps

- Databases: RDS on AWS, Cloud SQL on GCP, Azure Database for PostgreSQL (with pgvector enabled)

- Caching: ElastiCache on AWS, Memorystore on GCP, Azure Managed Redis (Enterprise)

- AI/LLM: Amazon Bedrock, Vertex AI, Azure AI Foundry

- Networking: VPCs, subnets, private DNS, and service exposure — automatically configured per cloud

- Identity: IAM roles on AWS, service accounts on GCP, managed identities on Azure

Browse the source: github.com/DefangLabs/pulumi-defang

Azure Preview: What's In, What's Coming

Azure support is launching in preview. Here's what we shipped:

Supported now:

- Container workloads via Azure Container Apps with auto-scaling

- Managed PostgreSQL via Azure Database for PostgreSQL Flexible Server (Burstable tier, pgvector enabled)

- Managed Redis via Azure Managed Redis (Enterprise tier, TLS 1.2)

- LLM integration via Azure AI Foundry (OpenAI-compatible endpoint)

- Container image builds via Azure Container Registry Tasks

- Private networking: VNet with dedicated subnets for Container Apps, PostgreSQL, and Redis with private DNS

On the roadmap:

- OIDC cloud connection for Azure — connect your Azure subscription from the Defang Portal, no manual credential setup

- Resource detail view — browse your Azure resources (Container Apps, databases, Redis) directly in the Portal

- GPU instances for self-hosted model inference

- Custom VNet peering and private endpoints for existing infrastructure

- MongoDB support

We want feedback on what to prioritize. Open a GitHub issue or find us on Discord.

What This Looks Like in Practice

As AI agents and AI-powered services become the norm, the deployment problem is growing fast. A single product might consist of multiple specialized agents, each needing managed inference, databases, and networking — and your customers want all of it running in their own cloud.

If you're an ISV building AI-powered software: You sell an AI document processing agent. Customer A is on Azure, Customer B is on AWS, Customer C is on GCP. Today that means three sets of Terraform modules, three AI service integrations (Azure AI Foundry, Bedrock, Vertex AI), and weeks of onboarding per customer. With Defang, you ship one compose.yaml. One definition, one command, any customer's cloud — including managed inference and databases mapped automatically.

If you're an enterprise platform team: Your compliance team requires all LLM inference to stay within your Azure subscription. Your developers define the agent deployment in Compose and deploy without learning Azure-specific primitives. The open-source providers let your security team audit exactly what Container Apps, PostgreSQL, Redis, and AI Foundry resources are created before anything runs.

Get Started

Clone a sample and deploy it to your cloud account:

Browse the provider source: github.com/DefangLabs/pulumi-defang

Found a bug or want to shape the roadmap? Open a GitHub issue or find us on Discord.

Related posts

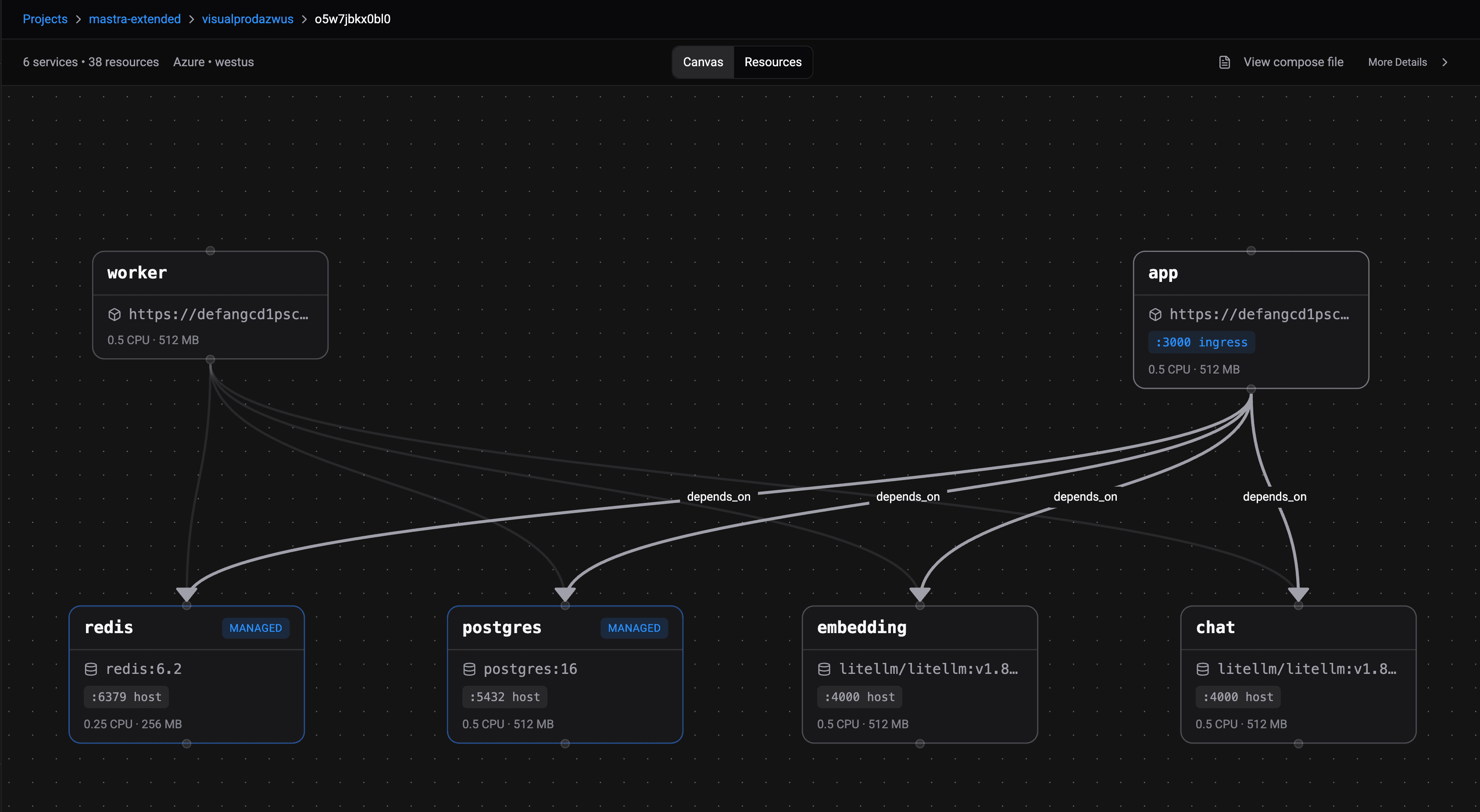

Introducing the Docker Compose Visualizer

Understanding how your multi-service application connects can be challenging. The Docker Compose Visualizer provides an interactive, system-wide view of your deployed architecture, making cloud infrastructure more intuitive and accessible.

An AI Agent That Reads Your Repo and Writes Your Compose File

The Defang Portal now includes an AI-powered Compose file generator. Point it at a GitHub repo, and an agent analyzes your code, reads your dependencies, and produces a production-ready compose.yaml. You can also watch the work happen in real time.

Your Coding Agent Just Learned to Deploy

Defang now ships skills for Claude Code and Codex. Type /defang:deploy in your AI coding agent and it handles CLI setup, authentication, stack creation, config, and deployment, step by step, inside your editor.